On February 5, Anthropic released Claude Opus 4.6, its most powerful AI model. Among the model’s new features is the ability to coordinate teams of autonomous agents – multiple AIs that divide up the work and complete it in parallel. Twelve days after the release of Opus 4.6, the company dropped Sonnet 4.6, a cheaper model that almost matches Opus’s coding and computer-use capabilities. In late 2024, when Anthropic first introduced models that could control computers, they could barely use a web browser. Now Sonnet 4.6 can navigate web applications and fill out forms with human-level capability, according to Anthropic. And both models have a working memory large enough to hold a small library.

Enterprise customers now make up about 80 percent of Anthropic’s revenue, and the company closed a $30 billion funding round last week at a $380 billion valuation. By any measure available, Anthropic is one of the fastest-scaling technology companies in history.

But behind the big product launches and valuations, Anthropic faces a serious threat: the Pentagon has signaled that it could designate the company a “supply chain risk,” a label more often associated with foreign adversaries, unless it drops restrictions on military use. Such a designation could effectively force Pentagon contractors to remove Claude from sensitive work.

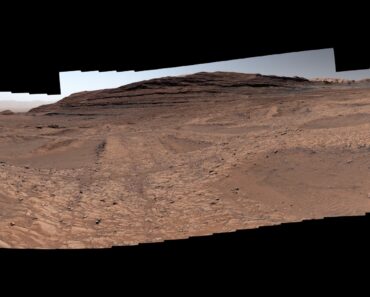

Tensions boiled over after January 3, when US special operations forces raided Venezuela and captured Nicolás Maduro. The Wall Street Journal reported that forces used Claude during the operation via Anthropic’s partnership with defense contractor Palantir — and Axios reported that the episode escalated an already fraught negotiation over exactly what Claude could be used for. When an Anthropic leader contacted Palantir to ask if the technology had been used in the raid, the question raised immediate alarm at the Pentagon. (Anthropic has disputed that the outreach was meant to signal disapproval of a specific operation.) Defense Secretary Pete Hegseth is “close” to severing the relationship, a senior administration official told Axios, adding, “We will see to it that they pay a price for forcing our hand in this way.”

The collision raises a question: Can a company founded to prevent AI catastrophe hold its ethical lines when its most powerful tools—autonomous agents capable of processing vast data sets, identifying patterns and acting on their conclusions—run on classified military networks? Is a “safety first” AI compatible with a client who wants systems that can reason, plan and act on their own on a military scale?

Anthropic has drawn two red lines: no mass surveillance of Americans and no fully autonomous weapons. CEO Dario Amodei has said Anthropic will support “national defense in every way except those that would make us more like our autocratic adversaries.” Other major labs (OpenAI, Google and xAI) have agreed to loosen safeguards for use in the Pentagon’s unclassified systems, but their tools don’t yet run inside the military’s classified network. The Pentagon has demanded that AI be available for “all lawful purposes.”

The friction tests Anthropic’s central mission. The company was founded in 2021 by former OpenAI executives who believed that the industry was not taking safety seriously. They positioned Claude as the ethical alternative. In late 2024, Anthropic made Claude available on a Palantir platform with a cloud security level up to “secret,” making Claude, by public accounts, the first major language model operating in classified systems.

The question the standoff now forces is whether “safety first” remains a coherent identity once a technology is embedded in classified military operations, and whether red lines are actually possible. “These words seem simple: illegal surveillance of Americans,” says Emelia Probasco, a senior fellow at Georgetown’s Center for Security and Emerging Technology. “But when you get down to it, there are whole armies of lawyers trying to figure out how to interpret that phrase.”

Consider the precedent. After the Edward Snowden revelations, the US government defended the bulk collection of telephone metadata – who called whom, when and for how long – arguing that this type of data did not have the same privacy protections as the content of conversations. The privacy debate was then about human analysts searching these records. Now imagine an AI system that queries large datasets – mapping networks, discovering patterns, flagging people of interest. The legal framework we have was built for an era of human judgement, not machine-scale analysis.

“In one sense, any kind of mass data collection that you ask an AI to look at is mass surveillance by simple definition,” says Peter Asaro, co-founder of the International Committee for Robot Arms Control. Axios reported that a senior administration official “argued that there is a significant gray area around” Anthropic’s restrictions “and that it is impractical for the Pentagon to have to negotiate individual use cases with” the company. Asaro offers two readings of the complaint. The generous interpretation is that surveillance is truly impossible to define in the age of AI. The pessimistic one, Asaro says, is that “they really want to use those for mass surveillance and autonomous weapons and don’t want to say that, so they call it a gray area.”

As for Anthropic’s other red line, autonomous weapons, the definition is narrow enough to be manageable: systems that select and engage targets without human oversight. But Asaro sees a more troubling gray area. He points to the Israeli military’s Lavender and Gospel systems, which have been reported to use AI to generate massive target lists that go to a human operator for approval before the strikes are carried out. “You’ve essentially automated the targeting element, which is something [that] we’re very concerned about and [that is] closely related, although it falls outside the narrow strict definition,” he says. The question is whether Claude, operating inside Palantir’s systems on classified networks, can do something similar (process intelligence, identify patterns, flag people of interest) without anyone at Anthropic being able to say exactly where the analytical work ends and the targeting begins.

The Maduro operation is testing just that distinction. “If you collect data and intelligence to identify targets, but humans decide, ‘Okay, this is the list of targets we’re actually going to bomb’ — then you have the level of human oversight we’re trying to require,” Asaro says. “On the other hand, you still become dependent on these AIs to select these targets, and how much investigation and how much digging into the validity or legality of those targets is a separate question.”

Anthropic may be trying to draw the line narrower – between mission planning, where Claude can help identify bomb targets, and the mundane work of processing documentation. “There are all these boring applications of large language models,” says Probasco.

But the capabilities of Anthropic’s models can make these distinctions difficult to maintain. Opus 4.6’s agent teams can split up a complex task and work in parallel, an advance in autonomous computing that could transform military intelligence. Both Opus and Sonnet can navigate applications, fill out forms and work across platforms with minimal supervision. The very qualities that drive Anthropic’s commercial dominance are what make Claude so attractive on a classified network. A model with a huge working memory can also hold an entire intelligence file. A system that can coordinate autonomous agents to debug a codebase can coordinate them to map a rogue supply chain. The more skilled Claude becomes, the thinner the line between the analytical grunt work Anthropic is willing to support and the surveillance and targeting it has vowed to refuse.

As Anthropic pushes the boundaries of autonomous AI, the military’s demand for these tools will only increase. Probasco fears that the clash with the Pentagon creates a false binary between safety and national security. “How about we have safety and national security?” she asks.

This article was first published at Scientific American. © ScientificAmerican.com. All rights reserved.